Why UC Law SF?

#1

More California judges than any other law school

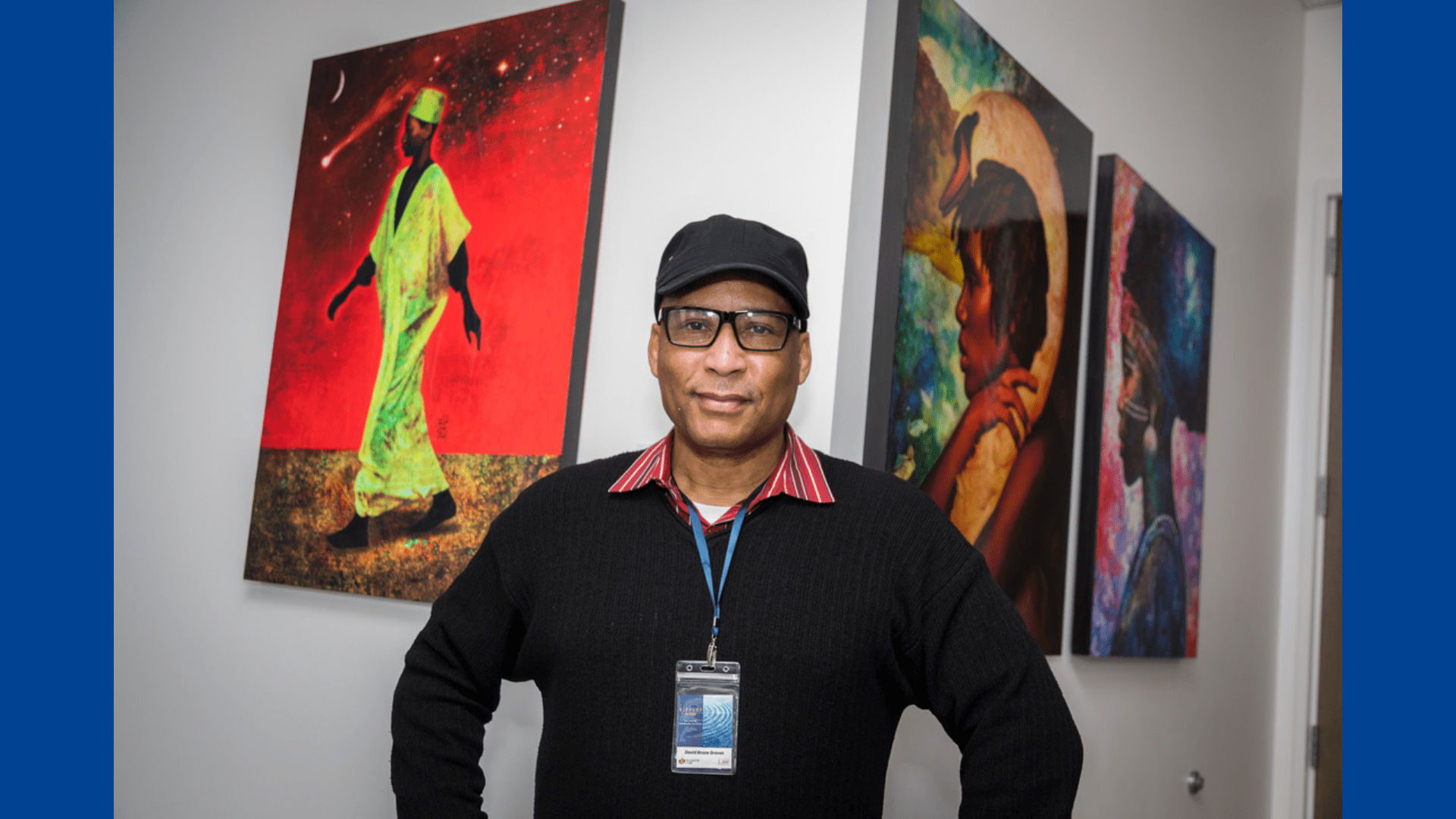

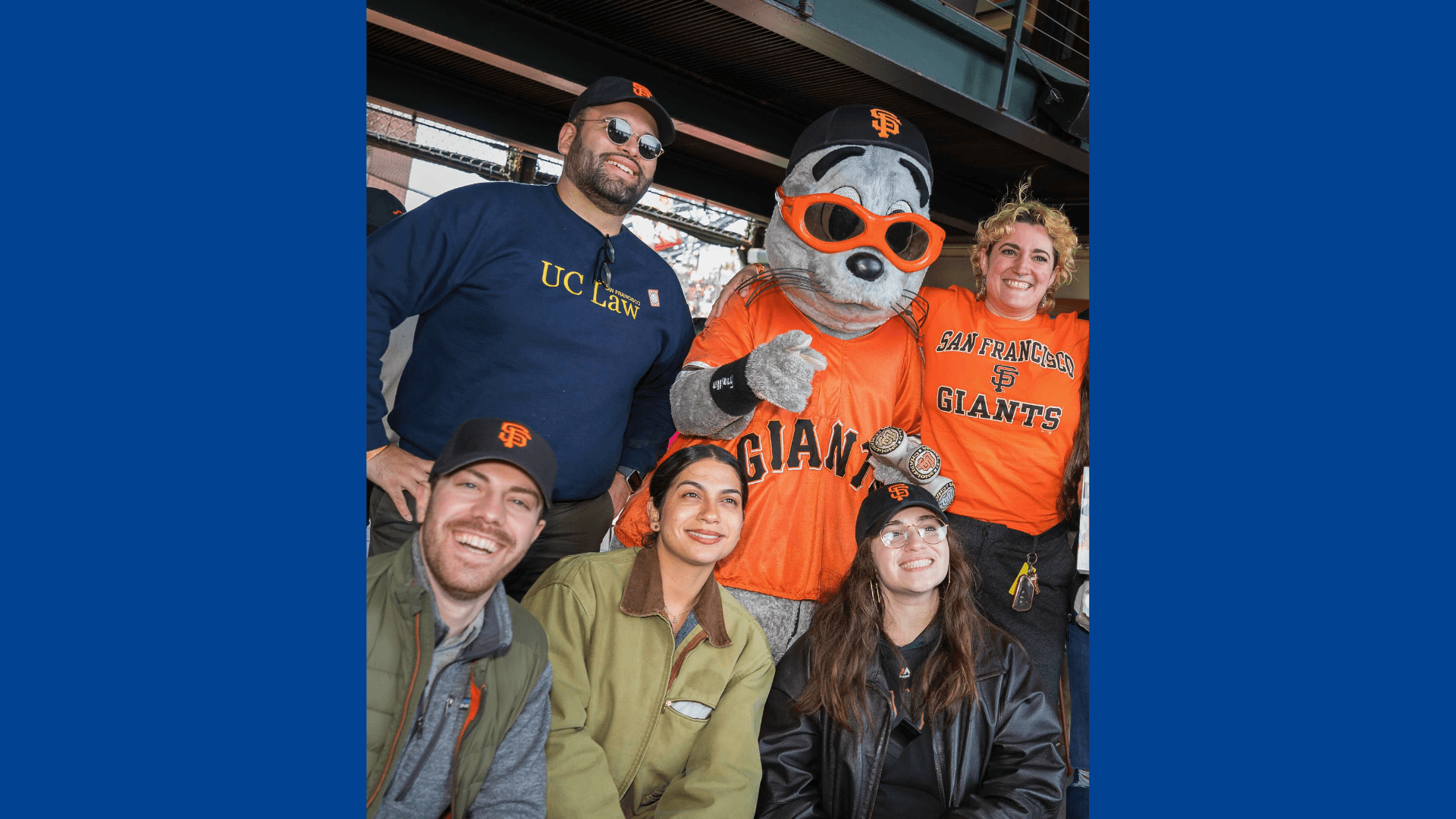

Our Community

Explore Our Campus

The Civic Center is home to UC Law SF, as well as City Hall, courthouses, the Bill Graham Auditorium (where Commencement is held!), the opera, the ballet, the symphony, and the Asian Art Museum. Beyond offering plenty to explore between classes, the Civic Center puts us close to courthouses, government offices, and non-governmental organizations, which makes our location ideal for students participating in externships. We are a short walk from diverse and vibrant neighborhoods, including Hayes Valley, with its shops and restaurants, and South of Market, home to some of the biggest names in technology. A few subway stops away are Union Square, the beautiful Ferry Building with its famous outdoor markets, and neighborhoods like the Castro and the Mission.

Featured Events

Fresno Alumnus of the Year 2024 – Richard Aaron ’79

**PLEASE NOTE: DATE CHANGE (to May 2nd) AND LOCATION CHANGE (to Baker Manock and Jensen)** All UC Law SF alumni in the Fresno area are invited to celebrate […]

Mastering the Fundamentals of Mediation Certificate Training

CNDR presents its annual 40-hour comprehensive mediation practitioner training, held entirely in person on the UC Law SF campus.

International Training Program

September 9-13, 2024. International Training Program: Promoting Mediation Through Private and Court-Connected Mediation Centers. The Center for Negotiation and Dispute Resolution (CNDR) at UC Law SF, in partnership with the […]